Ultra Scalable Ethereum

Ethereum has the best scalability strategy of any blockchain. Full stop.

Does that sound crazy? That’s ok. We’ve been called crazy before 😘

When crypto was in the bear market and no one believed in ETH, we were the ones shouting ETH is money. We modeled the idea of ETH as a triple point asset when the crypto world thought ETH was a useless utility coin. This was when EIP 1559 was just a concept, PoS was years away, and ETH was trading near $150.

Contrarian ideas always sound crazy at first.

But now institutions are referencing the triple point asset thesis in their research as their bull case for Ethereum. Ultra Sound Money is winning.

We believe Ethereum scalability is as underrated now as ETH the asset was in 2019.

Modular blockchains are going to change everything about this industry.

Short-term narratives come and go. But fundamental technical and economic changes like modular blockchains are worth paying attention to. They’re worth investing in. They’re worth holding for the long term.

Ultra Sound Money meets Ultra Scalable Ethereum. 🤝

Strap in—this is essential reading. (Here’s the podcast version)

David explains.

- RSA

Ethereum development is reaching a new level of maturity. The gap between where Ethereum currently stands and its defined roadmap is rapidly shrinking.

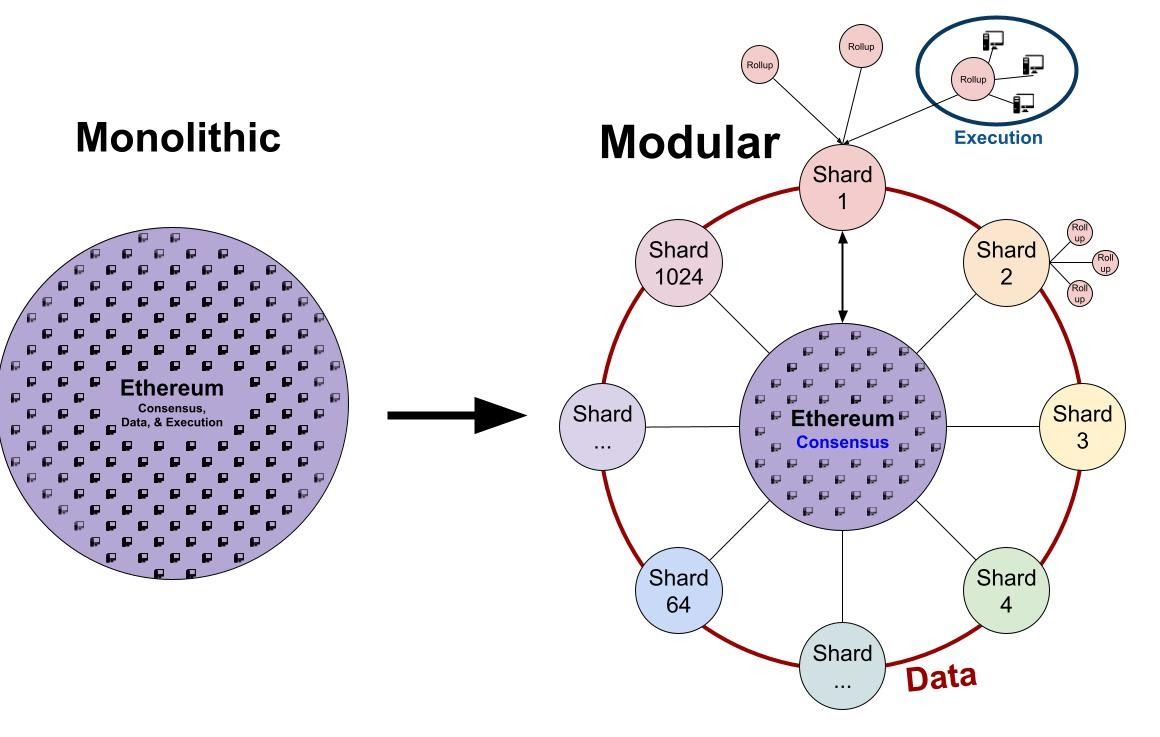

Now that we’re at this phase, it’s become clear that Ethereum is developing a modular design architecture. The properties that make a blockchain ‘a blockchain’ are being differentiated and compartmentalized in order to allow each to become individually maximized.

In this article, we’ll explore how Proof of Stake, sharding, and rollups enable a modular blockchain design that actualizes the long-term vision of Ethereum and sets the standard for blockchains going forward.

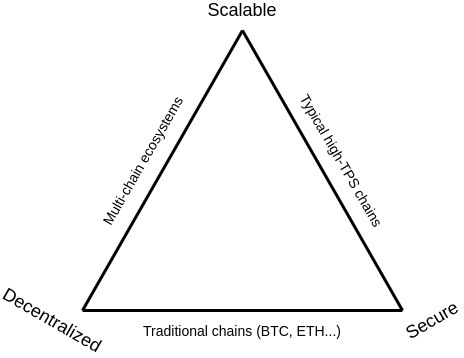

The Blockchain Trilemma

The infamous Blockchain Trilemma dictates that you can only optimize for two of the three properties of a blockchain. One must be sacrificed due to technical limitations. Those three properties of a blockchain are:

- Scalability - What’s the data throughput of the system? How many TPS?

- Decentralization - How many nodes are there? Where are the centers of power?

- Security - How difficult is it to attack?

So why is this the case? Why can’t blockchains achieve sufficient decentralization, security, and scalability in one go?

Because blockchains are monolithic. They try to achieve all three goals on the mainchain. When you modularize these components, however, the limitations of the blockchain trilemma disappear.

As an analogy, think about the division of labor. This economic principle partitions a complex task into smaller components that individuals can specialize in, allowing the entire system to produce far more output than the same number of workers, each working alone.

So what does a modular blockchain look like and how does it work? Before we get there, we need to understand three components of a blockchain that make up the three properties described above.

Components of a Blockchain

Decentralization, scalability, and security are all properties of a blockchain. They are traits a blockchain can embody, but there are underlying components that enable these properties. Modular blockchains divide these components into separate parts and maximize them. So what are they?

- Consensus - Provides security and defines the canonical truth about data stored on the blockchain. What block number are we on? What are the contents of block ‘N’?

- Execution - The computation required to update the blockchain from N to N+1: take the old state, apply a batch of transactions, and transition to the new state.

- Data Availability - The data that the L1 guarantees is available to be referenced. All the data that makes up ‘N’.

Before diving deeper, let’s get familiar with these terms using an analogy. It’s a Wednesday morning and you’re headed to your local Wells Fargo branch office to deposit a check for $100.

- The state of your account is your bank balance, which is sitting at a healthy $69,420.

- All the previous account transactions from inception until now are contained in the data availability layer, a centralized database hosted and secured by Wells Fargo.

- When the bank teller processes your check, Wells Fargo executes a state transition against the data availability layer to update your balance to $69,520.

- The “N+1” state ($69,520) is now reflected on your Wells Fargo mobile app, web app, and other branch locations. There is consensus because all updates take place on a centralized database that only people with the right credentials can access.

Now, in blockchain terms:

Consensus

Consensus defines the canonical truth about data stored on the blockchain.

In this category, we find Proof of Work and Proof of Stake. These are the systems that define how blocks are added to the chain and how participants can agree that the blocks are correct.

With this, blockchains can progress forward in time without fracturing into a million different chains, each with its own version of what is true.

Execution

The execution property of blockchains is where the state of a blockchain is carried forward into the next block.

Block N has some specific state (account balances, contract code, etc.), representing how data has changed since Block N-1. Validators then grab a batch of transactions from the mempool and produce Block N+1, which takes the state of Block N and changes it according to those transactions. (The mempool is like the line of people waiting for the bank teller.)

Transactions are executed when a validator computes the new state of the next block using the selected mempool transactions as inputs along with the consensus mechanisms.
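To make the N to N+1 step concrete, here is a minimal sketch of a state transition. The `Transfer` type, the `apply_block` function, and the account names are all hypothetical; the balances reuse the bank analogy's numbers, and real chains also handle signatures, gas, and contract code, which this toy ignores.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Transfer:
    sender: str
    recipient: str
    amount: int


def apply_block(state: dict[str, int], txs: list[Transfer]) -> dict[str, int]:
    """Return the state after block N+1, leaving the block-N state untouched."""
    new_state = dict(state)
    for tx in txs:
        # Skip transfers the sender cannot afford instead of failing the block.
        if new_state.get(tx.sender, 0) < tx.amount:
            continue
        new_state[tx.sender] -= tx.amount
        new_state[tx.recipient] = new_state.get(tx.recipient, 0) + tx.amount
    return new_state


# State at block N, reusing the bank analogy's numbers.
state_n = {"you": 69_420, "check_writer": 500}
# Transactions waiting in the (toy) mempool; the second one is unaffordable.
mempool = [
    Transfer("check_writer", "you", 100),
    Transfer("check_writer", "you", 1_000),
]

state_n_plus_1 = apply_block(state_n, mempool)
print(state_n_plus_1)  # {'you': 69520, 'check_writer': 400}
```

Every node that runs the same function over the same inputs lands on the same N+1 state, which is what lets consensus agree on a single result.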

Data Availability

Data availability refers to the data hosted on each blockchain node. If there’s data on the nodes, it is available to anyone and everyone using that blockchain; there are no dependencies on this data. It’s available. Full stop.

This also makes this data very precious and expensive. There is an extremely limited amount of this data available (we call it blockspace!). When you add some bit of data to a blockchain, you’re adding that data to all computers running the nodes for the chain, now and forever. The purpose of a blockchain is to be immutable, which means that the data held inside these systems is quite literally the most valuable kind of data ever created by humanity.

Everyone wants their data (transactions) to be immutable, so people bid very high prices to access these properties, which is why we see very high gas prices on the Ethereum L1.

Monolithic Blockchains

Monolithic blockchains are blockchains that try to do all three things (consensus, execution, and data availability) in the same spot: on the L1. Most blockchains to date, including Ethereum as it currently stands, are monolithic blockchains.

The problem with monolithic blockchains is that they are subject to the blockchain trilemma. Because the same layer is responsible for all three components of what makes a blockchain ‘a blockchain’, optimizing for one property of a blockchain is constraining for the others.

- Want more blockspace available by making faster block-times and bigger blocks? Then decrease the number of nodes that can keep up with the chain’s rate of progress. That way, the slow computers of the world won’t hold back the speed of the chain.

- Want fast transactions? Reduce the number of nodes so fewer total computers actually need to do the computation. That way, we don’t have a bunch of redundant computers doing all the same computation; we’ll just trust that the few ones that do the hard work of the computation aren’t lying to the network.

- Want to optimize for security and decentralization? Decrease the supply of block space and reduce node hardware requirements, so that everyone can be a participant in the network, but expect your transactions to take much longer to clear.

Monolithic Blockchains have gotten us this far, but they are now hitting the limits to scale.

The era of monolithic blockchains is coming to an end.

The era of modular blockchains is upon us.

Modular Blockchains

Modular blockchains take the three components that monolithic blockchains currently house on the L1 and compartmentalize them. Just like with the division of labor, unbundling each component allows us to optimize each and produce a far better product in which the whole is greater than the sum of its parts.

Modular Execution with Rollups

Rollups can process transactions orders of magnitude faster than the mainchain!

Rollups are unburdened from the responsibility of consensus and data availability by creating a transaction execution environment separate from Ethereum, and processing the transactions before making an update to the state of the L1.

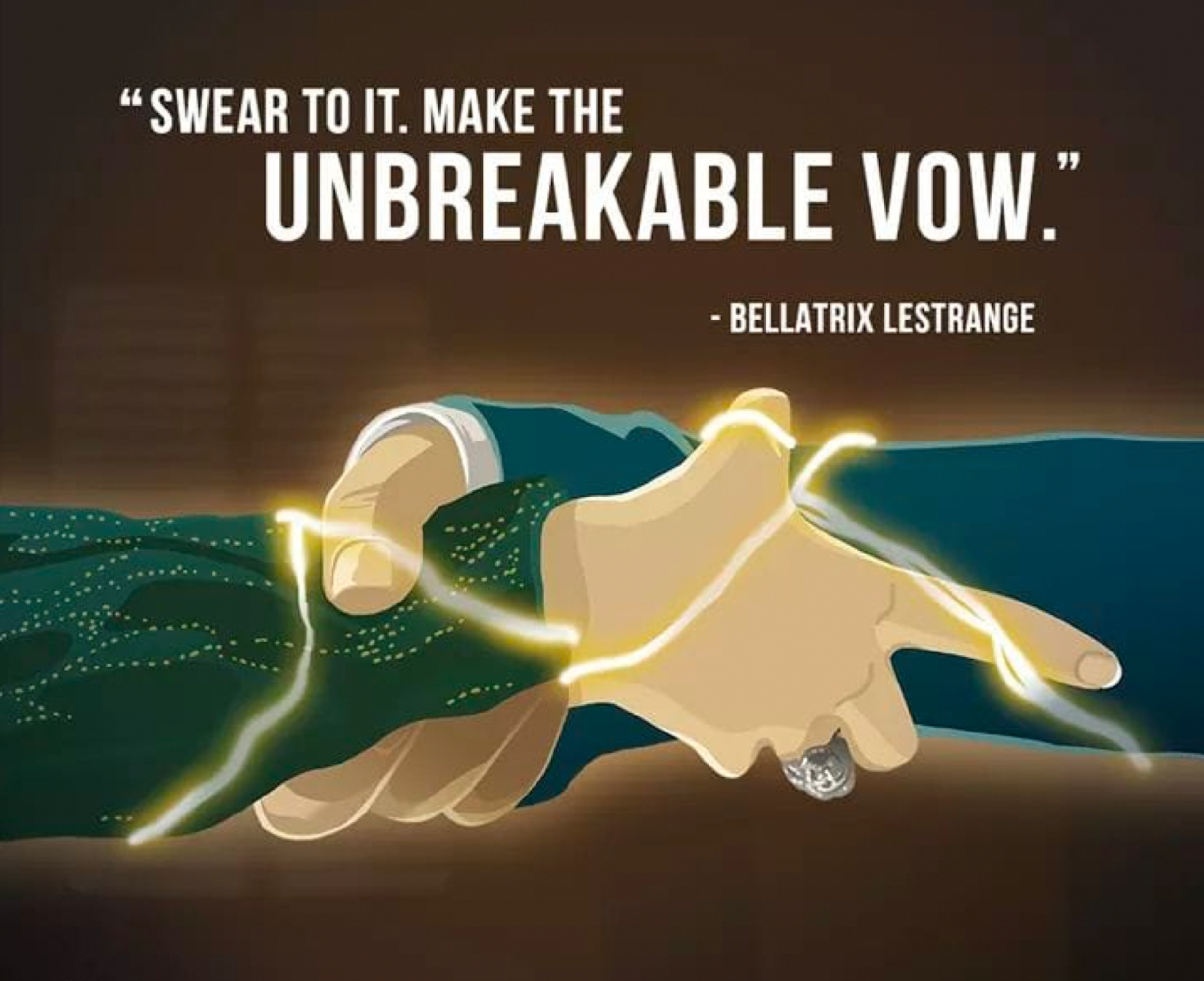

Rollups do not have to concern themselves with consensus or data availability in the same way a highly-decentralized L1 does; they are free to make any and all sacrifices on these properties, because rollups are cryptographically tied to Ethereum. In other words, a rollup is created by making a transaction on Ethereum that contains the set of rules the rollup commits to following.

The moment a rollup is initialized, it makes a cryptographic promise to Ethereum that it will follow the rules.

This ‘initial vow’ sets the rollup’s own limitations on how transactions are managed (i.e., the rollup commits to showing mathematical proofs that all transactions are legitimate). It is how Ethereum’s L1 security is bridged to a rollup without also porting over its baggage of slow consensus and limited data availability.

Contained inside this rollup-initializing transaction is the ability for any user to exit all their money from the rollup. This is called ‘the escape hatch’, and means that when a rollup ‘breaks’ or turns malicious, you can just hop out of the escape hatch via a transaction on the L1. A broken rollup is like a broken escalator; it just turns into stairs.
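As a rough illustration of the escape hatch, the toy below models an L1 bridge that stores only a commitment to the rollup's balances and lets users withdraw against it. Everything here (`ToyBridge`, `state_root`, the single-hash commitment) is a hypothetical simplification: real rollups use Merkle proofs plus validity or fraud proofs rather than revealing the whole balance table.

```python
import hashlib
import json


def state_root(balances: dict[str, int]) -> str:
    """Commit to a full balance table with one hash (a stand-in for a Merkle root)."""
    encoded = json.dumps(balances, sort_keys=True).encode()
    return hashlib.sha256(encoded).hexdigest()


class ToyBridge:
    """Hypothetical L1 contract holding rollup funds and the last committed root."""

    def __init__(self, deposits: dict[str, int]) -> None:
        self.locked = sum(deposits.values())
        self.root = state_root(deposits)
        self.exited: set[str] = set()

    def commit(self, new_root: str) -> None:
        # A real rollup would only accept this update alongside a proof.
        self.root = new_root

    def escape(self, user: str, claimed_balances: dict[str, int]) -> int:
        """Withdraw on L1 against the last committed root, even if the rollup is offline."""
        if state_root(claimed_balances) != self.root:
            raise ValueError("claimed state does not match the committed root")
        if user in self.exited:
            raise ValueError("user already exited")
        amount = claimed_balances.get(user, 0)
        self.exited.add(user)
        self.locked -= amount
        return amount


bridge = ToyBridge({"alice": 10, "bob": 5})
rollup_state = {"alice": 7, "bob": 8}        # after some rollup transactions
bridge.commit(state_root(rollup_state))      # last update before the rollup halts
print(bridge.escape("alice", rollup_state))  # 7
```

The point of the sketch is that `escape` only touches data held on the L1, so it keeps working when the rollup operator disappears.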

The bridge between a rollup and Ethereum exists regardless of whether the rollup is online and functioning, and allows the settlement assurances of Ethereum to extend to the rollups on top of it.

This bridge connects the security and decentralization of Ethereum to the transaction execution environment of the rollup.

With this bridge, each module of Ethereum complements the other; the security module (Proof of Stake) is added to the scalability module (rollups). The properties of one module imbue themselves into the properties of the other, which is how we achieve both scale and decentralization without compromising either.

Rollups cost nearly nothing to maintain, and very few nodes are required to be live at any given time. They are also not burdened by the expensive consensus mechanisms that are needed for security. Ethereum’s L1 pays for security and maintains decentralization so the rollup doesn’t have to.

Certain flavors of rollups can even be as performant as a centralized server (hello Coinbase, Fortnite, and Facebook, but now with decentralization!). Further innovations to rollups can actually make them even more performant than a centralized database.

Modular Security with PoS Validators

A Proof of Stake consensus mechanism creates an intangible object that is responsible for providing security to the system. That object is the virtual currency that is being staked to the PoS network. The act of using the native currency to validate the chain decouples the association between physical hardware and network security.

No longer are specific computers responsible for network security. Now, all computers can become responsible for network security. Because ETH can be staked on any internet-connected computer, this formally instantiates the value of providing security to the asset itself.

The capital requirements to maintain a physical PoW network can instead be funneled into buying the ‘virtual ASIC’ (the PoS token), increasing the capital efficiency of the asset. Unlike physical hardware, PoS assets don’t deteriorate over time, so little-to-none needs to be sold as operating expenses.

Reducing the economic costs of running a validating node down to the costs of capital (32 ETH) and a computer (the one you're on!) increases the total viable number of possible validators of a blockchain. While 32 ETH is expensive (currently ~$128,000), it is roughly two orders of magnitude lower than the smallest viable proof of work mining operation ($10s of millions). Additionally, decentralized staking protocols like Lido or Rocket Pool allow for any amount of ETH to be pooled and staked, making the 32 ETH threshold an arbitrary number. The yield you get from owning 3.2 ETH versus 320 ETH is mostly the same and will approach parity over time.

A Proof of Stake network strips away the hardware requirements for validating the chain, making the average consumer device powerful enough to validate the chain. This optimizes the connection between network and hardware.

By minimizing the role of hardware, you maximize the accessibility of the chain, and open up the possibility of validating the chain to the maximum number of people. Proof of Stake minimizes the requirements of network validation down to the absolute minimum: capital.

As a result of Proof of Stake, Ethereum now has two homogenous pools that, when combined together, become one modular pool of network security. This is called the ‘validator pool’, and it's where Ethereum sources its security from.

Ethereum developers have stated a desire to see 10M ETH staked to Ethereum to be considered “secure”. 10M / 32ETH = 312,500 validators.
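The arithmetic behind that validator count, plus the ETH price implied by the ~$128,000 figure quoted earlier, can be checked in a few lines. The constant names are mine, and the dollar values are only as current as the article's own quote.

```python
STAKE_PER_VALIDATOR_ETH = 32
TARGET_STAKED_ETH = 10_000_000
QUOTED_STAKE_COST_USD = 128_000  # the article's ~$128,000 for 32 ETH

validators = TARGET_STAKED_ETH // STAKE_PER_VALIDATOR_ETH
implied_eth_price_usd = QUOTED_STAKE_COST_USD // STAKE_PER_VALIDATOR_ETH
target_stake_usd = TARGET_STAKED_ETH * implied_eth_price_usd

print(validators)             # 312500
print(implied_eth_price_usd)  # 4000
print(target_stake_usd)       # 40000000000
```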

The granularization of Ethereum’s security into individual validating instances allows these instances to be directed by the beacon chain to wherever these resources need to go, giving Ethereum maximum choice about how to allocate its security resources.

Having a modular pool of security resources available enables Ethereum to modularize its data storage capacity via sharding.

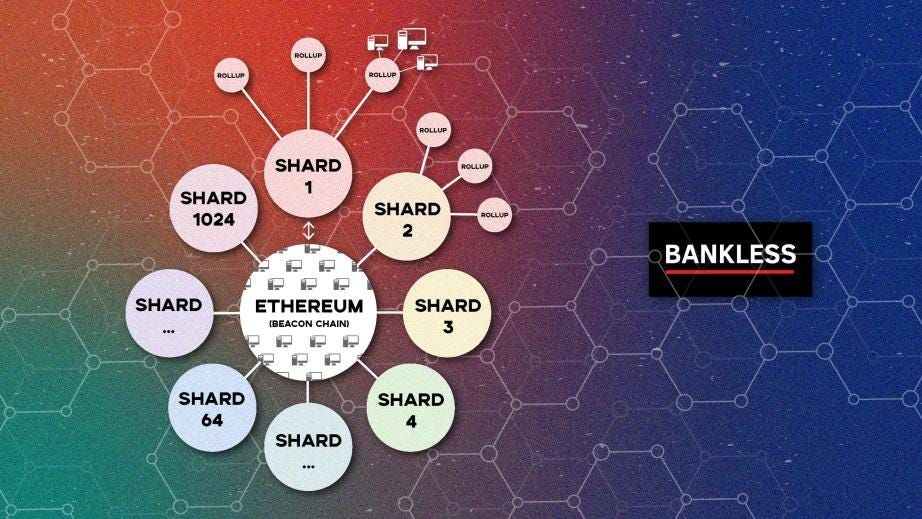

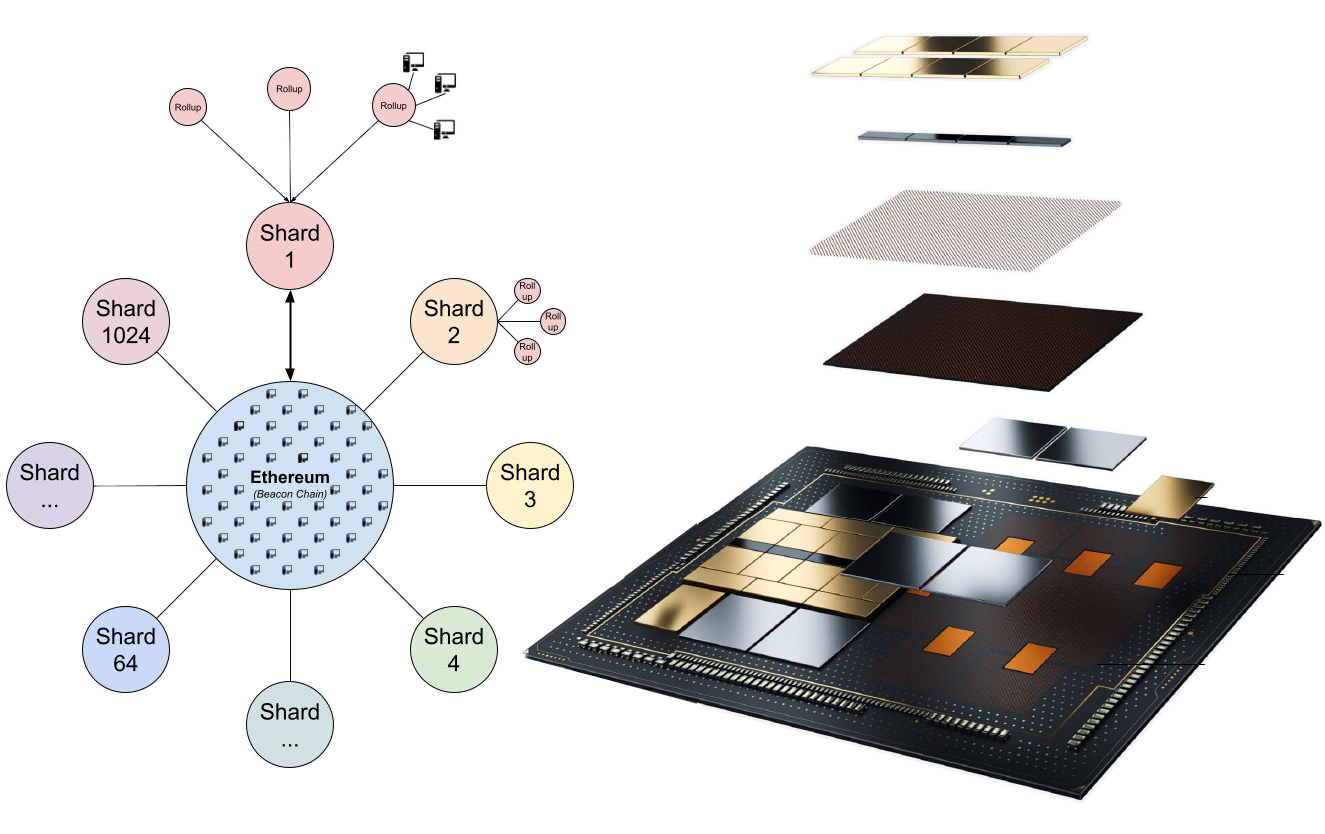

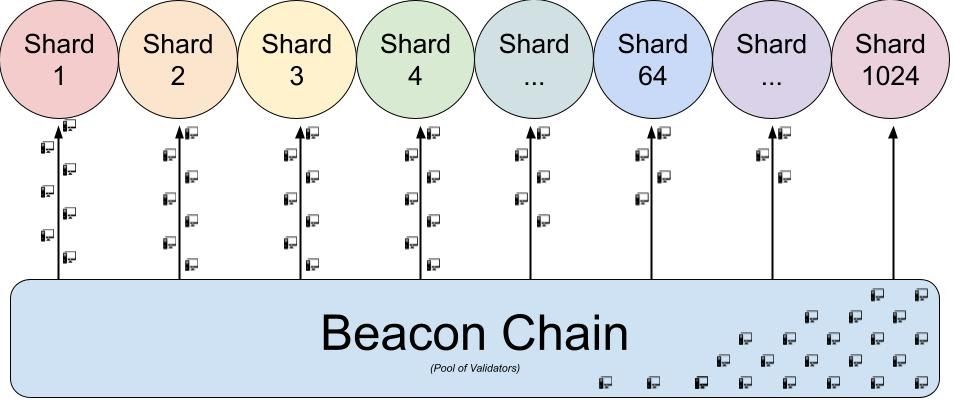

Maximize Data Availability: Sharding

Shards maximize the blockspace available in the L1!

Every blockchain has a certain supply of security available to it. The security of Bitcoin is the supply of SHA256 hashes the world can produce. The security of PoS Ethereum is the supply of Ethereum validators that exist in the validator pool.

Ethereum has a ‘validator pool’ of all Ethereum validators that are up for random selection to validate an Ethereum block. When more validators come online and supply their security (32 ETH on the promise to follow the rules) to Ethereum, it makes Ethereum more secure.

When you add in sharding, it will also make Ethereum more scalable. Sharding allows for a ‘redistribution of security’ across a higher number of chains, rather than having all the system’s security wholly pointed upon one single chain.

Having 300,000 validators (300k instances of 32 ETH staked) all securing one monolithic chain is an overabundance of security and an inefficient allocation of resources. By spreading validators across multiple chains, Ethereum’s L1 can scale by creating 64 Ethereums with ~4,700 validators on each one.

This makes Ethereum’s scalability positively correlated with its security. While a monolithic blockchain approaches the limits determined by the blockchain trilemma, a sharded blockchain inverts the relationship between scale and security; it turns its limiting factors into its growth factors.

Sharded Ethereum is slated to have 64 shards at the start, but the goal is to get it up to 1024 shards. Additionally, as Moore’s law progresses and all of our household computers get more powerful, shards can increase in both quantity and capacity.

Having 64 shards at the genesis of sharding won’t mean that we’re increasing Ethereum’s capacity by 64x. Rather, the number of ‘Ethereum chains’ we have will go up by 64x, but each chain will be only ~⅓ as large, so the net result is around an ~18x increase in capacity, not 64x.
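The shard numbers can be sanity-checked with a short calculation. This is a back-of-the-envelope sketch using only the figures quoted in this section; the variable names are mine. Note that a shard size of exactly ⅓ gives a multiplier a little above 21x, so the ~18x figure corresponds to each shard being slightly under ⅓ the size of today's chain.

```python
from fractions import Fraction

VALIDATORS = 300_000
SHARDS = 64

# Validators available to each shard if the pool is split evenly.
validators_per_shard = VALIDATORS // SHARDS
print(validators_per_shard)  # 4687

# Capacity multiplier if each shard is exactly one third the size of today's chain.
relative_shard_size = Fraction(1, 3)
capacity_multiplier = SHARDS * relative_shard_size
print(capacity_multiplier)                   # 64/3
print(round(float(capacity_multiplier), 1))  # 21.3

# Relative shard size that would produce the quoted ~18x figure.
implied_relative_size = Fraction(18, SHARDS)
print(implied_relative_size, float(implied_relative_size))  # 9/32 0.28125
```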

But, as stated above, as physical hardware improves and Ethereum’s validator pool increases, we can increase both the size and supply of shards, which importantly links Ethereum’s scalability to Moore’s Law.

Synergies between Optimized Modules

The beauty of the modular design is that the optimizations of each module amplify the optimizations in the others.

There are three synergies:

- Modular PoS security can redistribute validators across more shards, as more validators come online and can securely support additional data. More decentralization ➡️ more scale.

- Additional shards on the L1 have amplified effects upon the execution capacity of rollups. Rollups can compress a lot of data before they add it to an L1 shard, so any additional space that shards have has an outsized impact on the space available on rollups. More scale ➡️ faster execution.

- The more net transaction activity that happens on the rollups, the more total fees are paid for buying L1 blockspace. More total fees paid for blockspace increase the revenue paid to L1 validators. The more validators are paid, the larger the incentive there is to spin up additional validators. Adding more validators to the L1 adds computational resources that can create more shards. More shards? See step 2.

More Scale, Faster Execution

By sharding Ethereum into 64 different data availability layers, we create much more space for rollups to deploy their bundles of thousands and thousands of transactions. Sharding the L1 has outsized impacts to the scalability of Rollups on the L2. Because Rollups compress transactions into compact bundles, any increases of data on the L1 create orders of magnitude more space on the L2.

This is where Ethereum gets micro-transaction capable. Sharded Ethereum is where the floodgates open for all rollups. Increasing the amount of block space that’s available to consume creates massive fee reductions of the rollups on top of the shards.

The compressed rollup transactions (think: zip file!) now have a much larger supply of available block space. Rollups amortize the cost of their L1 transactions across all the users of the rollup. If it costs 1 ETH to deploy a big bundle of transactions to Ethereum, the rollup spreads that 1 ETH cost over the thousands of transactors in the bundle. When we have 64 shards to deploy transactions upon, the cost per transaction should drop by multiple orders of magnitude.
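The amortization is simple division, sketched below. The function name and the batch sizes are hypothetical; only the 1 ETH batch cost comes from the example above.

```python
def cost_per_transaction(batch_cost_eth: float, transactions_in_batch: int) -> float:
    """Share one L1 posting cost equally across every transaction in the batch."""
    if transactions_in_batch <= 0:
        raise ValueError("a batch needs at least one transaction")
    return batch_cost_eth / transactions_in_batch


# Same 1 ETH L1 cost, spread over progressively larger (hypothetical) batches.
for batch_size in (1_000, 10_000, 100_000):
    print(batch_size, cost_per_transaction(1.0, batch_size))
```

Each tenfold increase in batch size cuts the per-transaction share of the L1 cost by ten, which is why more blockspace and more users both push rollup fees down.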

Once this occurs, rollups are free to stop constraining their own amount of available block space as they currently do, and let the engine really rev.

The combination of shards and rollups allows for computational resources to become assets to the network, rather than liabilities. More computers, of any computational power, can always contribute their resources to the network and have those resources become effectively utilized, regardless of how much resources the computer has available to give. A computer can either be a rollup validator, and help compress data sent to the L1, or it can contribute its resources to the L1 validator pool and help spin up more shards.

Adding your node to a monolithic blockchain adds another bottleneck that the network must get through. A monolithic blockchain cannot process more transactions than a single node can. Since all monolithic chain nodes process all transactions, adding your computer to the monolithic network simply adds just another computer that needs to be able to keep up with the network.
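One way to picture this bottleneck is a toy model in which the chain can only move as fast as the slowest node it wants to keep in sync. The function and the per-node capacities are hypothetical illustrations, not measurements.

```python
def monolithic_throughput(node_capacities_tps: list[int]) -> int:
    """Every node re-executes every transaction, so the slowest node sets the pace."""
    if not node_capacities_tps:
        raise ValueError("need at least one node")
    return min(node_capacities_tps)


nodes = [1_200, 1_000, 800]                   # hypothetical per-node capacities in TPS
before = monolithic_throughput(nodes)
after = monolithic_throughput(nodes + [300])  # a slower machine joins the network
print(before, after)  # 800 300
```

In this model an extra node can never raise throughput; at best it leaves it unchanged, and a slower node either drags the chain down or gets left behind.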

Economic Sustainability

Modular Ethereum is an economically sustainable Ethereum. This is the industry of crypto-economics, and we need to get our economics right in addition to our cryptography.

In economics, Gresham's law is a monetary principle stating that "bad money drives out good". When someone is presented with two different monies, they will save the one that retains its value, and spend the one that loses its value.

With fiat currencies, we see people fleeing to the currency that is losing its value the least, aka the dollar. But now, in the world of ‘sci-fi economics’, we can dream bigger dreams than just ‘not losing value’. Instead, in the crypto world, we will ask “which currency grows in value the most?”

Bitcoiners are very excited about BTC as the first hard-cap money that is promised to retain its purchasing power by being immune from further issuance. Bitcoin promises to become more scarce as the economy grows around it.

The same supply of BTC, but inside of a bigger economy, equals comparatively more scarce BTC.

Ethereans are excited about ETH and its ability to be burnt as a function of demand for the Ethereum network, and about the possibility of it becoming deflationary when EIP-1559 burns more ETH than is issued.

A bigger economy equals a higher ETH burn rate, which creates an increasingly scarce ETH.

Transaction Fees = Monetary Premium

Turning Gresham’s law into crypto-economic terms: networks need to collect more transaction fees than what is issued to validators.

Crypto-economic networks pay their security providers via issuance and fees. Using fee revenue to pay for security displaces the amount of issuance that is required. The more fees a blockchain collects, the less it needs to inflate supply through issuance.

Collect more, issue less.

Herein lies the problem with scaling a monolithic blockchain. Many blockchains promise low fees and high throughput. By committing to this, they simultaneously are committing to never creating a meaningful fee market. If you want block space to be cheap, you must not rely on transaction fees to pay for security.

Therefore, you must rely on issuance, which in Gresham’s terms, would be called “bad money”.

Let’s consider Polygon PoS and Solana.

Polygon PoS is collecting roughly $50,000/day in transaction fees, or $18M annualized. Meanwhile, it’s distributing well over $400M in inflationary rewards. That’s an incredible net loss of 95%.

As for Solana, it collected only ~$10K/day for the longest time, but with the speculative mania it has seen a significant increase to ~$100K/day, or $36.5M annualized. Solana is giving out an even more astounding $4B in inflationary rewards, leading to a net loss of about 99.1%.

As a thought experiment, Solana would need to do 154,000 TPS at the current transaction fee just to break even — which is totally impossible given current hardware and bandwidth.
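The fee-versus-issuance comparison can be reproduced from the quoted figures. This is a rough sketch: the function name is mine, the inputs are the rounded numbers above, and the per-transaction fee is backed out from the 154,000 TPS break-even claim rather than taken from any fee schedule.

```python
SECONDS_PER_YEAR = 365 * 24 * 60 * 60


def net_loss(daily_fees_usd: float, annual_issuance_usd: float) -> float:
    """Fraction of the security budget not covered by fee revenue."""
    annual_fees_usd = daily_fees_usd * 365
    return 1 - annual_fees_usd / annual_issuance_usd


polygon = net_loss(50_000, 400_000_000)
solana = net_loss(100_000, 4_000_000_000)
print(f"{polygon:.1%}")  # 95.4%
print(f"{solana:.1%}")   # 99.1%

# Average fee per transaction implied by "154,000 TPS to break even" on $4B/year.
implied_fee_usd = 4_000_000_000 / (154_000 * SECONDS_PER_YEAR)
print(f"{implied_fee_usd:.5f}")  # 0.00082
```

At well under a tenth of a cent per transaction, closing the gap through volume alone requires the throughput figure quoted above.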

The bigger issue, though, is that those additional transactions don’t come for free — they add greater bandwidth requirements, greater state bloat, and in general, higher system requirements still.

The critical feature of economic sustainability is that it compounds in both directions.

A constrained layer 1 establishes a strong fee market. By limiting the amount of available block space, you increase both decentralization (by reducing the hardware requirements of participating nodes) and fee revenue capture (by limiting the supply of available block space).

Scarce block space creates high fee revenue, which generates a high ETH burn rate, which makes ETH more scarce, making it more valuable.

The more valuable a currency is, the less is needed to issue to achieve the same impact. Therefore, security actually becomes cheaper to pay for when the value of the currency is high. Under a cheap security paradigm, you further reduce net new issuance because you are simply issuing less, and this further compounds the scarcity and value of the asset.

Going in the other direction, it all unwinds.

Chains that advertise a cheap fee environment cannot collect any meaningful fee revenue (or else they would not be cheap). If you cannot collect fees, you must pay for security via issuance. If you pay for security via issuance, then the currency inflates and leaks value over time.

Over time, an inflationary currency adds to its own supply, which reduces its value. Reduced value means you must issue more to pay for security. Further issuance inflates supply and devalues the money, which marks the beginning stages of an inflationary spiral.
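A toy simulation shows the shape of this spiral. It rests on strong assumptions that are mine, not the article's: the network's total market value stays flat, the security budget is a fixed dollar amount, and all of it is paid through new issuance. The starting numbers are arbitrary.

```python
def simulate_issuance_spiral(
    supply: float,
    market_value_usd: float,
    security_budget_usd: float,
    years: int,
) -> list[tuple[int, float, float]]:
    """Return (year, token price, tokens issued) for each simulated year."""
    history = []
    for year in range(1, years + 1):
        price = market_value_usd / supply     # flat market value spread over more tokens
        issued = security_budget_usd / price  # tokens needed to pay the same dollar budget
        supply += issued
        history.append((year, price, issued))
    return history


history = simulate_issuance_spiral(
    supply=1_000_000,
    market_value_usd=1_000_000,
    security_budget_usd=100_000,
    years=5,
)
for year, price, issued in history:
    print(year, round(price, 3), round(issued))
```

Under these assumptions the price falls every year and the number of tokens issued rises every year, even though the dollar cost of security never changes.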

While bull market speculation can mask this effect for a while, there is no escaping economic law. Currencies that issue will not hold their value as much as currencies that burn, and these two paths will result in dramatically different futures.

There is a direct correlation between the throughput of the L1, and the soundness of the money that powers it.

If you juice up the throughput of your chain, you increase the inflation of the asset. Sadly, when you also increase the throughput of your chain, you reduce the ability for the average person to become a validator.

This separates the community that surrounds this blockchain into two classes of citizens; the ones that have the means to validate the chain and have rights to the seigniorage, and those that don’t and can only purchase what the validators sell to them.

Tying it all together

Ethereum has a constrained L1 that optimizes for strong decentralization and efficient security. This blockspace constrained L1 creates an expensive fee market that adds monetary premium into ETH.

Sharding increases available L1 block space as a function of the size of Ethereum’s security. As Ethereum’s pool of validators grows larger, the number of viable shards also goes up, making Ethereum more scalable as it grows in decentralization.

Rollups create unconstrained execution environments that bundle-up transactions and compress them into the tiniest possible packet of data. This unlocks new types of economic activity and allows a vibrant and cheap economy to flourish, increasing the net economic activity that settles on the L1. As more economic activity develops on the rollups, rollup fees drop as they are amortized across a wider set of participants. As more shards are added to Ethereum, and as shards become larger, rollup fees continue to lower as a function of Moore’s Law.

Unlocking microtransaction viability increases the total amount of viable economic activity that can be supported, allowing net economic activity orders of magnitude more headroom to operate, which is all funneled down to the L1 Ethereum via a series of compressions and commitments across various layers, and all collapses to the competitive fee market on the L1, burning ETH as a function of total economic activity.

The beauty of the modular design is that the optimizations to each module amplify the optimizations to the others.

- Increasing decentralization via PoS increases the number of shards added to Ethereum

- More shards on the Ethereum L1 add orders of magnitude more scale to the rollup L2s

- More scale on rollup L2s unlocks newly viable economic activity, which ultimately adds more collective fees paid by the rollups to the L1.

- More collective fees paid to the L1 increases the incentive to run a validator, making the pool of validators larger, allowing for more shards to be created.

- Repeat.

Execution-Optimized Monolithic Blockchains

Every bull market, there comes a new cohort of chains that elect to sacrifice decentralization in order to optimize for the execution property of a blockchain. They increase the blocksize and constrain the nodes so that the exuberance of the bull market can find a home with cheap fees.

In bull markets, Ethereum and Bitcoin become extremely congested, because they have optimized for decentralization, which gives legitimacy to the chains that optimized for the opposing property: transaction execution.

As discussed above, monolithic blockchains that optimize for execution have committed to a few shortcomings. They cannot meaningfully collect fees and have sacrificed decentralization.

If this execution-optimized monolithic blockchain instead rolls itself up into an L2 on a different L1 chain, it could actually be even more optimized for execution, while not having to deal with the constraining factors of security and decentralization. The chain’s native asset no longer needs to be issued to pay for security, since security is derived from the L1.

Eliminating the inflation from the supply schedule allows the smaller gas markets to have an outsized impact upon the long-term supply of the native asset.

Chains like Solana, Binance Smart Chain, Avalanche, and Polygon all may need to ‘rollup’ themselves in order to drive long-term sustainability to their token, and in fact the sooner they roll themselves up, the scarcer their native asset can become.

I used to think this was the most pragmatic approach, but I now think there’s too much capital and hubris invested in monolithic projects for them to take this rollup-only approach any time soon. The one that does will be a pioneer and gain immense network effects, though.

The Logical Conclusion

The world of crypto is rife with tribalism and politics. Statements uttered by one person in crypto are tainted by which tribe that person comes from. Incentives and motivations are driven by pre-existing beliefs and bag bias.

Thankfully, code and math are immune from all of these things. The entire article above is able to be rewritten without using the word ‘Ethereum’, and instead could be an agnostic roadmap for a generally optimized modular blockchain.

In fact, this architecture is not being executed by Ethereum alone. Rollups are not just an Ethereum thing; Tezos is also embracing a rollup-centric roadmap. NEAR is also designing for sharded data availability. Celestia is building a security & DA layer exclusively for rollups.

The point is if we went back in time, or hopped to different parallel universes and re-rolled the dice 10,000 times, the crypto industry would find itself at a modular design conclusion 99.9% of the time.

It’s the most logical conclusion for the development of blockchain technology. The only reason why it has been ‘politically linked’ to Ethereum is that Ethereum has been the only ecosystem to adequately fund the R&D efforts able to bring us to this point.

Over time, we will see all L1 blockchains either morph into a modular design structure (constrain L1 blockspace, push execution to rollups, increase node count), and compete in the world of global non-sovereign money, or they will instead do away with the baggage of consensus and data, and instead simply port their execution environment over to a more decentralized chain.

The modular blockchain design also illustrates the necessity of enshrining decentralization as the key property of blockchains that enables all the rest of the features. Ethereum has solved the scalability trilemma by increasing decentralization, not sacrificing it. Only by optimizing for decentralization are you able to receive the benefits of modularization illustrated above.

If you have decentralization, you can have anything.

Action steps

- 🔺 Understand the constraints of the blockchain trilemma

- 🛣️ Recognize why a modular design is the optimal scaling roadmap

- 🎧 Listen to this article in audio format