Solving Private AI

Sponsor: Ready — Spend USDC worldwide with zero FX fees and up to 3% cashback.

Bankr launched Skills this week, a library of plug-and-play tools for AI agents to perform common onchain actions. If you're building an agent and don't want to write custom integrations from scratch, this is worth bookmarking.

The catalog currently includes 22 skills across three categories: Bankr, Uniswap, and Base. Each skill comes with a safety score so you know what you're integrating, though this is experimental and not foolproof (I tend to trust Bankr).

A few that stand out:

- Bankr's core skill lets your agent launch a token, earn from trades, and fund itself, including paying for inference via its wallet. The wallet comes with guardrails like IP whitelisting, hallucination guards, and transaction verification, and it receives 57% of trading fees directly.

- Veil enables private transactions via deposits and withdrawals through its shielded pools.

- Bankr Signals lets you publish and access trading signals on Base. Agents can register as signal providers, publish trades with TX hash proof, or consume signals from top performers.

- Neynar offers full Farcaster integration — post casts, like, recast, follow users, search content, and manage identities.

Why skills matter: smaller models equipped with the right ones can outperform larger models without them. Skills extend capabilities without switching to heavier, more expensive models. That's exactly what this week's main article explores.

If you're stumped on what to build, Bankr's docs include guides like building a self-sustaining agent. You can also create your own skills. The design space is infinite, and I'd highly recommend jumping in.

Two weeks ago we discussed Vitalik's updated framework for how Ethereum intersects with AI, which fittingly arrived as Anthropic's Opus 4.6 report showcased concerning emergent behaviors in testing. Both then and in yesterday’s premium article on the synergy between AI and crypto, I stressed two pillars of Vitalik's analysis:

- Ethereum as an avenue for increasing trustless and private interactions with AI

- Ethereum as a platform for instilling permissions and control with AI

As we know, AI is becoming embedded everywhere as agentic abilities progress. Samsung just announced that Perplexity will be integrated into Galaxy AI on the S26 series with access to device context, following the trend of others like Apple Intelligence and Gemini Nano that are all moving AI closer to the user.

While on-device models are the final frontier approaching quickly, AI has moved closer to the user across all fronts. People give Claude, ChatGPT, and Perplexity access to their calendars, emails, and files every day through desktop apps and browser extensions, especially since the advent of Claude Cowork. Either way, AI systems are getting deeper access to personal data, whether they run locally or call home to a server.

If you're worried about opaque models like Opus 4.6 — systems we clearly don’t fully understand or control given the findings of 4.6’s Risk Report — having this kind of access, your options are limited. Opt out entirely? That's not realistic. Anyone who's tried to limit their relationship with technology knows how hard that is in a networked world.

The Privacy Spectrum: Trust, Hardware, Math

We've seen attempts at private AI. Venice offers private inference through policy, promising not to log your queries. Freysa uses trusted execution environments (TEEs), hardware enclaves designed to keep data secure even from the server operator.

Both have limits. Venice requires trusting their word. TEEs require trusting the chip works as designed, and researchers have broken TEE implementations repeatedly. These are improvements over baseline cloud inference, but they're still trust-based.

The real solution is fully homomorphic encryption (FHE), the most aspirational form of cryptography, which enables computation on encrypted data without ever decrypting it. The model processes encrypted data and returns encrypted data. Your actual data never exists in unencrypted form anywhere but your device.
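To make "computation on encrypted data" concrete, here is a minimal toy sketch. It is not FHE: it uses the Paillier scheme, which is only additively homomorphic, and the primes are tiny and insecure. The function names and parameters are illustrative assumptions, not any FHE library's API. It only shows the core idea that a server can combine ciphertexts without ever seeing the plaintext.

```python
import math
import secrets

def keygen(p=1789, q=1861):
    """Toy Paillier keys from two small primes (insecure, illustration only)."""
    n = p * q
    phi = (p - 1) * (q - 1)
    mu = pow(phi, -1, n)  # modular inverse of phi mod n (Python 3.8+)
    return n, (phi, mu)   # n is the public key; (phi, mu) stays on your device

def encrypt(n, m):
    """Encrypt an integer 0 <= m < n under public key n, using generator g = n + 1."""
    n2 = n * n
    while True:
        r = secrets.randbelow(n - 1) + 1  # fresh randomness per ciphertext
        if math.gcd(r, n) == 1:
            break
    return (pow(n + 1, m, n2) * pow(r, n, n2)) % n2

def decrypt(n, priv, c):
    """Recover the plaintext using the private key."""
    phi, mu = priv
    x = pow(c, phi, n * n)
    return ((x - 1) // n * mu) % n

def add_encrypted(n, c1, c2):
    """Server-side step: multiplying ciphertexts adds the hidden plaintexts."""
    return (c1 * c2) % (n * n)

n, priv = keygen()
c_sum = add_encrypted(n, encrypt(n, 1200), encrypt(n, 345))
print(decrypt(n, priv, c_sum))  # 1545, computed without the server seeing 1200 or 345
```

Full FHE goes further by supporting both addition and multiplication on ciphertexts, which is what running a neural network requires and also where the heavy computational cost comes from.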

The Obstacle: FHE Remains Computationally Intensive

Here's the catch. According to Zama, an FHE company that has done significant work on applying FHE to a range of use cases including AI, fully encrypted inference is currently feasible only for small, older models at roughly GPT-2 scale.

If you need frontier model performance fully under encryption, FHE looks like a dead end. Zama's hybrid approach (running most of the model locally while FHE-protecting select sensitive parts) works today, yet full encryption on frontier models remains years away. But that assumes you need frontier models for frontier-quality results...

The Breakthrough: Small Models With Skills Beat Big Models Without Them

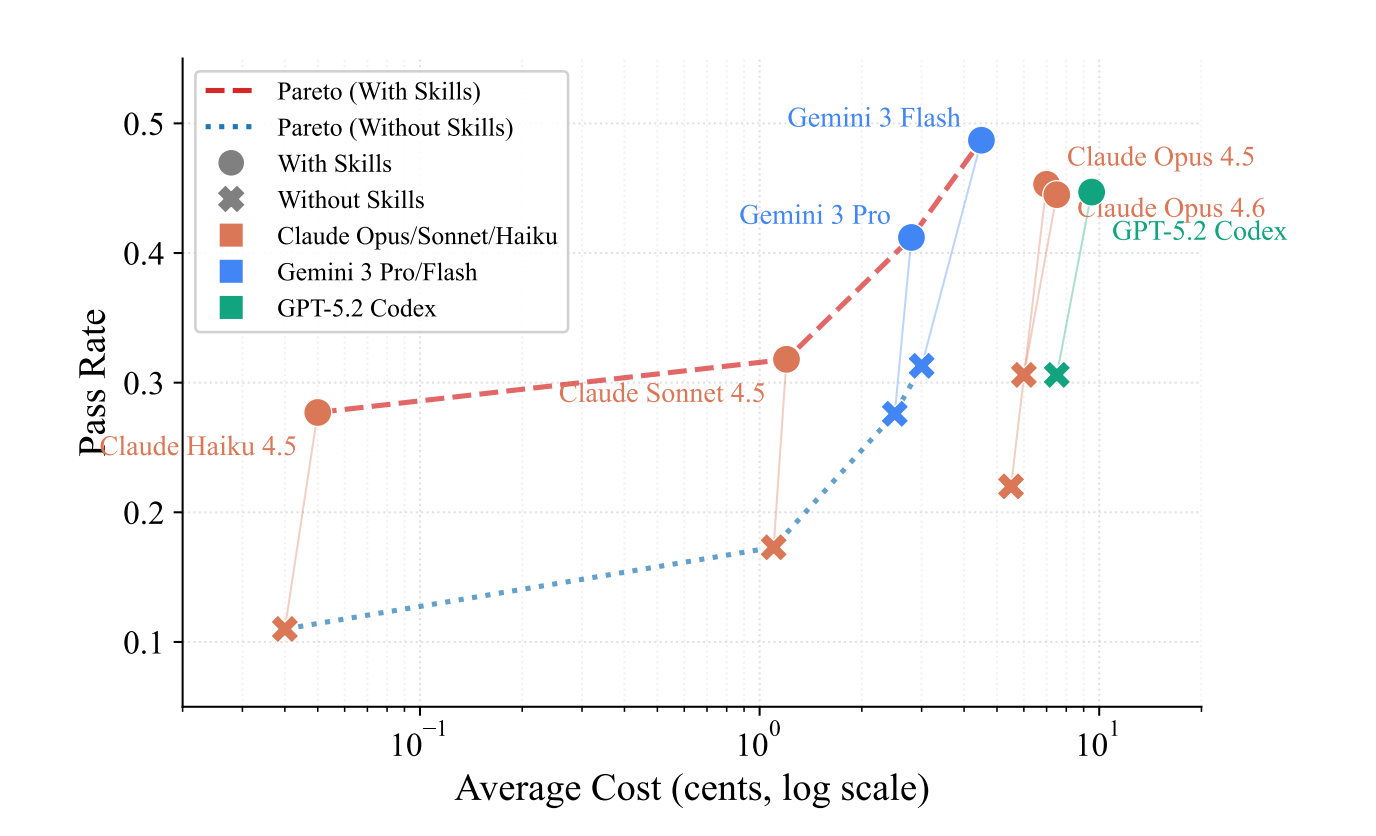

A study published earlier this month, SkillsBench, tested whether smaller models could match larger ones when augmented with curated procedural knowledge — what Claude popularized as "Skills." Skills are structured guides outlining step-by-step protocols for models to navigate specific domains.

The results:

- Claude Haiku 4.5 (small) with Skills: 27.7% pass rate

- Claude Opus 4.5 (large) without Skills: 22.0% pass rate

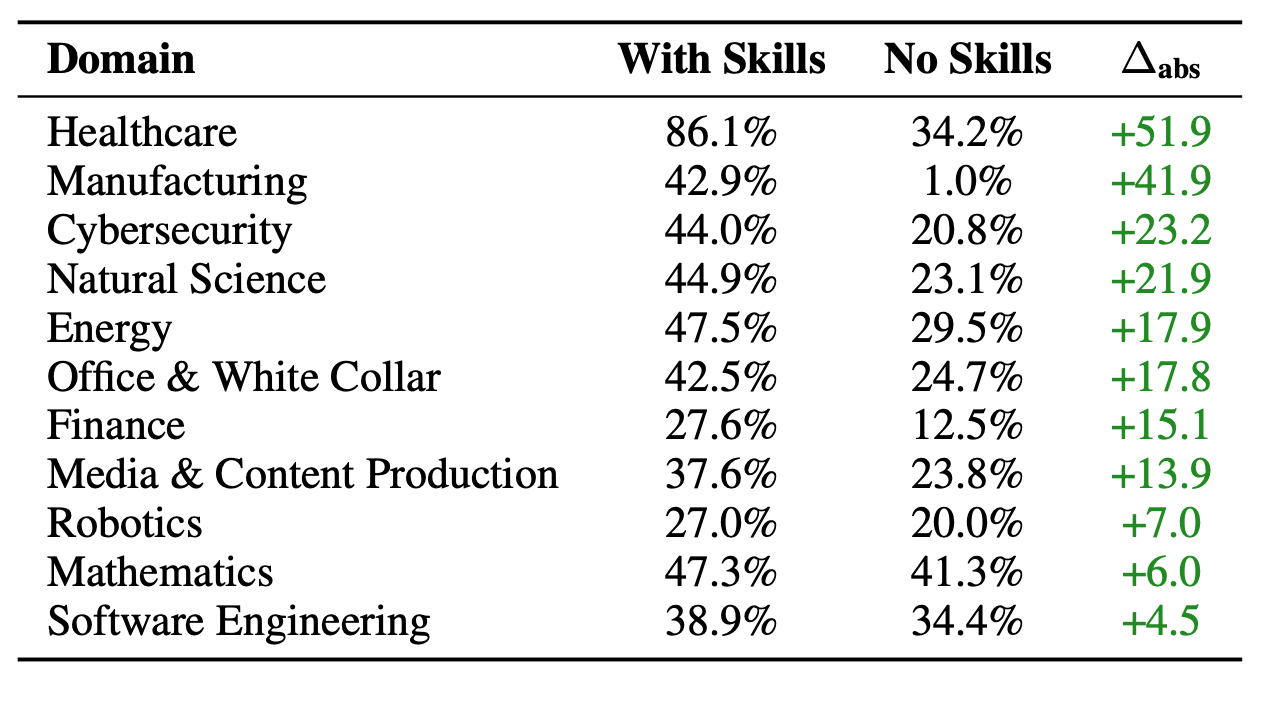

The small model with focused guidance outperformed the big model running naked. Healthcare tasks saw the largest improvement at +51.9 percentage points. Manufacturing and clinical workflows showed similar gains.
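As a quick sanity check on the size of that gap, the two headline pass rates quoted above can be compared in both absolute and relative terms. This is only arithmetic on the figures cited in this article; the variable names are my own.

```python
# Pass rates quoted above (percent)
haiku_with_skills = 27.7
opus_without_skills = 22.0

# Absolute gap in percentage points
gap_pp = haiku_with_skills - opus_without_skills

# Relative improvement of the small model over the large one
relative_gain = gap_pp / opus_without_skills * 100

print(f"{gap_pp:.1f} percentage points")  # 5.7 percentage points
print(f"{relative_gain:.1f}% relative")   # 25.9% relative
```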

Two other findings are important too. First, 2-3 focused Skills outperformed comprehensive documentation, showing, as always, quality over quantity. Second, when researchers prompted models to generate their own skills before solving tasks, performance plummeted. Models can consume curated expertise but can't create it. Human curation remains essential (hooray!).
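To picture what "2-3 focused Skills" might mean in practice, here is a purely hypothetical sketch. The `Skill` structure, `select_skills`, and `build_prompt` are names I made up for illustration; this is not the SkillsBench harness or Anthropic's Skills format. It assumes a skill is a human-written list of steps and that you cap how many get attached to a task.

```python
from dataclasses import dataclass
from typing import List, Tuple

@dataclass(frozen=True)
class Skill:
    """Hypothetical container for a human-curated, step-by-step guide."""
    name: str
    domain: str
    steps: Tuple[str, ...]

def select_skills(task_domain: str, library: List[Skill], max_skills: int = 3) -> List[Skill]:
    """Keep only skills matching the task's domain, capped at a small number."""
    matching = [s for s in library if s.domain == task_domain]
    return matching[:max_skills]

def build_prompt(task: str, skills: List[Skill]) -> str:
    """Prepend the selected skills, as numbered steps, to the task description."""
    sections = []
    for skill in skills:
        numbered = "\n".join(f"{i}. {step}" for i, step in enumerate(skill.steps, 1))
        sections.append(f"Skill: {skill.name}\n{numbered}")
    sections.append(f"Task: {task}")
    return "\n\n".join(sections)

# Made-up library entries, for illustration only
library = [
    Skill("triage-notes", "healthcare", ("Extract symptoms", "Flag red-flag findings")),
    Skill("dosage-check", "healthcare", ("Look up the reference range", "Compare against the order")),
    Skill("invoice-audit", "finance", ("Match line items", "Check totals")),
]
print(build_prompt("Summarize this clinic visit", select_skills("healthcare", library)))
```

The cap reflects the finding above that a few focused guides did better than comprehensive documentation; how skills are actually chosen and formatted in a real system is left open here.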

Why This Matters for FHE

The domains where Skills help most — healthcare, finance, legal — are exactly the sensitive domains where you'd want cryptographic privacy.

This is why SkillsBench matters for the FHE timeline. If small models with curated domain knowledge can punch above their weight, the bar for "good enough" drops. We don't need FHE to scale all the way up to frontier models. We need the intersection: models small enough to be FHE-friendly, but capable enough, equipped with the right skills, to be useful.

That said, I want to stress again that there’s still a gap. Zama demonstrated FHE on 2019-era models while SkillsBench tested on Haiku 4.5, a model released last year. That's six years of model evolution, including the advent of ChatGPT, between what FHE can handle and what SkillsBench proved works. Skills augmentation is promising, but whether it can bridge that gap is unproven. The timeline is shorter than people assumed, but not tomorrow.

An Interim Bridge

The same research points to something more immediate.

The models that power Apple Intelligence, Gemini Nano, and their open-source equivalents already run locally on devices. While “local” by default does not mean “private,” with proper vetting, it could. Further, while open-source models lag behind frontier models, the gap is rapidly closing and, given the implications of SkillsBench’s findings, could disappear if they’re paired with Skills.

Sure, it's not mathematically guaranteed privacy. Your device could still be compromised, and you're trusting the model itself. But ERC-8004 can help here: even if a model is open source, a reputation layer can verify it isn't phoning home to a central provider.

What This Could Look Like

In conclusion, I share these findings and thoughts to make more concrete what Ethereum-aligned AI can look like. For users worried about AI's creeping access to personal data, cryptography offers paths forward beyond "trust us" or "opt out," even if those paths have yet to be fully drawn.

For developers, the SkillsBench findings suggest that models that are both capable and private, whether through cryptography or vetted locality, are closer than many believe. ERC-8004 once again proves useful here: it can provide this open-source interim bridge by making local inference actually verifiable. If anyone's building in this direction (whether full FHE-wrapped inference, Skills-augmented small models, or reputation layers for local AI), I'd love to hear about it.

Plus, other news this week...

🤖 AI Crypto

- Eigen Labs — Published 2026 priorities, positioning EigenCloud as verifiable compute infrastructure for AI agents through EigenLayer (trust layer), EigenDA (data), EigenCompute (compute), and EigenAI (deterministic inference).

- Flow — Unveiled its new agentic auction launchpad, which is built using the Uniswap CCA protocol and designed specifically for AI users.

- 🔥 NEAR — Introduced IronClaw, an OpenClaw alternative deployed inside encrypted enclaves on NEAR AI Cloud, letting users run the agent privately.

- Nous Research — Launched Hermes Agent, an open-source agent with multi-level memory that remembers what it learns and gets more capable over time, with persistent dedicated machine access.

- Obol — Announced ObolClaw, which lets users run OpenClaw agents that have access to an Ethereum RPC.

- Phantom — Released a Claude Code skill with dflow, letting one prompt generate a full-stack Solana app with wallets, swaps, predictions, and more built in.

- Venice: Added several new models, including Qwen 3.5, Nano Banana 2, and GPT 5.3 Codex.

📚 Reads

- 🔥 ADIN Research — The 2028 Abundance Transition: A Response to Citrini's "Global Intelligence Crisis"

- Andrew Hong — Herd MCP: The Missing Crypto "Web Search" Tool

- Milana Valmont — Why Ethereum Is the First Sovereign Bank for the AI Economy

Ready makes going bankless simple. With the Ready app, you can buy crypto, earn yield, and stay in control of your assets. Spend USDC anywhere Mastercard is accepted with the Ready Card, with zero fees and 3% cashback. Bankless readers get 20% off Ready Metal with code: BANKLESS20.

Not financial or tax advice. This newsletter is strictly educational and is not investment advice or a solicitation to buy or sell any assets or to make any financial decisions. This newsletter is not tax advice. Talk to your accountant. Do your own research.

Disclosure. From time to time I may add links in this newsletter to products I use. I may receive a commission if you make a purchase through one of these links. Additionally, the Bankless writers hold crypto assets. See our investment disclosures here.