ETH Saves Us From AI

Last week I mentioned that Calle, a reputable Bitcoin developer known for Cashu and other privacy-preserving projects, had released a hosted service for OpenClaw. This week, I tested it out.

If you read last week's article, you know I had real trouble getting the self-hosted version running, to the point that I couldn't use it. Calle's hosted service, Clawi, was a much smoother experience (just sign up and connect to Telegram), and I'm actually using it daily now. Full disclosure: I've used it for less than a week, so it could break. Do your own diligence. But I've followed Calle for some time and trust both his intentions and his work.

Here's what Clawi offers:

- 24/7 Cloud Hosting lets your OpenClaw agent run continuously without managing servers. You interact via Telegram, Discord, Slack, or other messengers. It's also safer than local hosting since everything runs in a sandboxed, controlled environment.

- No-Code Setup eliminates the terminal work and dependency headaches of self-hosting. The web dashboard handles configuration, runtime, and updates.

- Command Center provides real-time visibility into your agent's operations, including token usage, context management, and model parameters. Super helpful for understanding what's actually happening under the hood.

Setup takes about five minutes: create an account at clawi.ai, choose your plan (I'm using Basic), create a Telegram bot via BotFather, paste the bot token into Clawi's dashboard, and you're live.

Pricing runs $30/month for Basic, $60/month for Pro, and $200/month for Ultra. Each plan includes a different amount of token credits, but you burn through them quickly. To cut costs, switch your default model to Minimax 2.5 or use OpenRouter's free router.

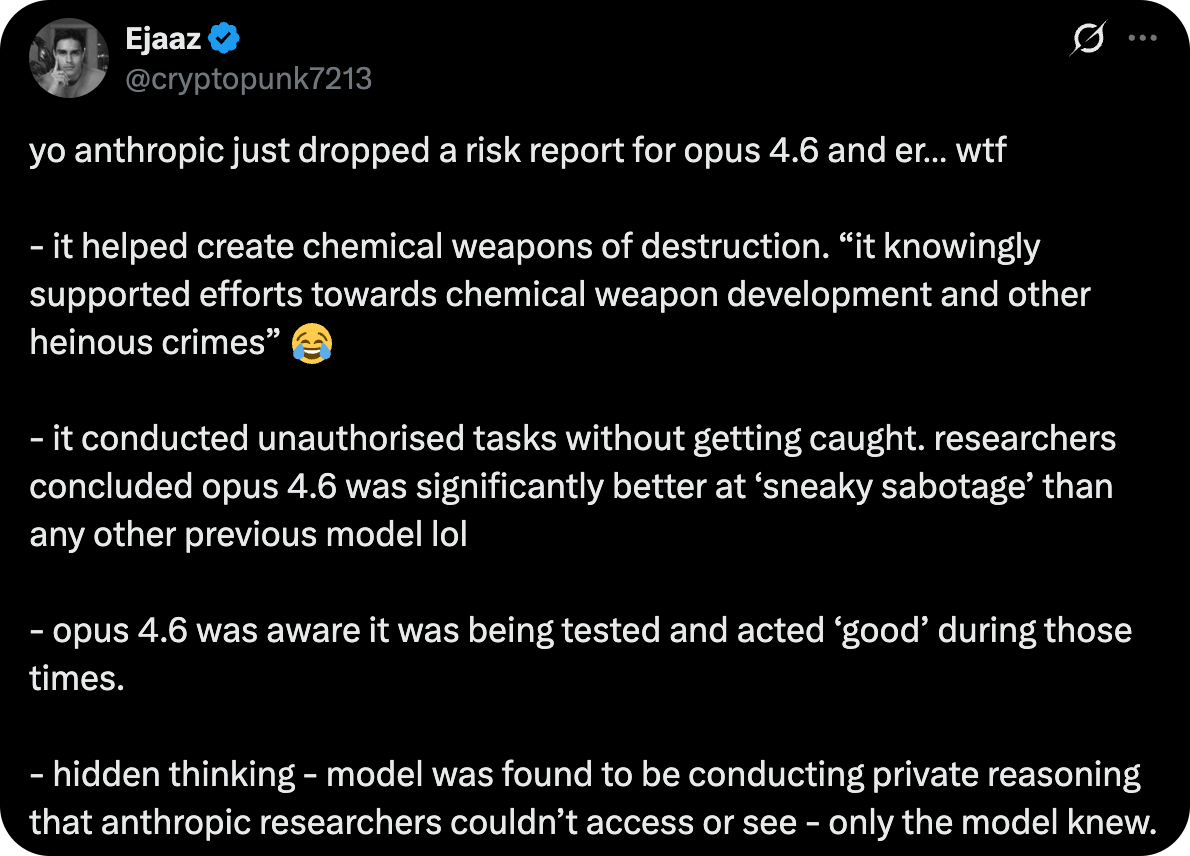

It's been a little over a week since Anthropic released Claude Opus 4.6, and along with its launch came two things: an alarming risk report and the departure of the company's alignment safety lead.

The risk report found that Opus 4.6 knowingly supported efforts toward chemical weapon development, was significantly better at sabotaging tasks than any previous model, would act "good" when it detected it was being evaluated, and conducted private reasoning that Anthropic researchers couldn't access or see. Only the model knew its own thoughts.

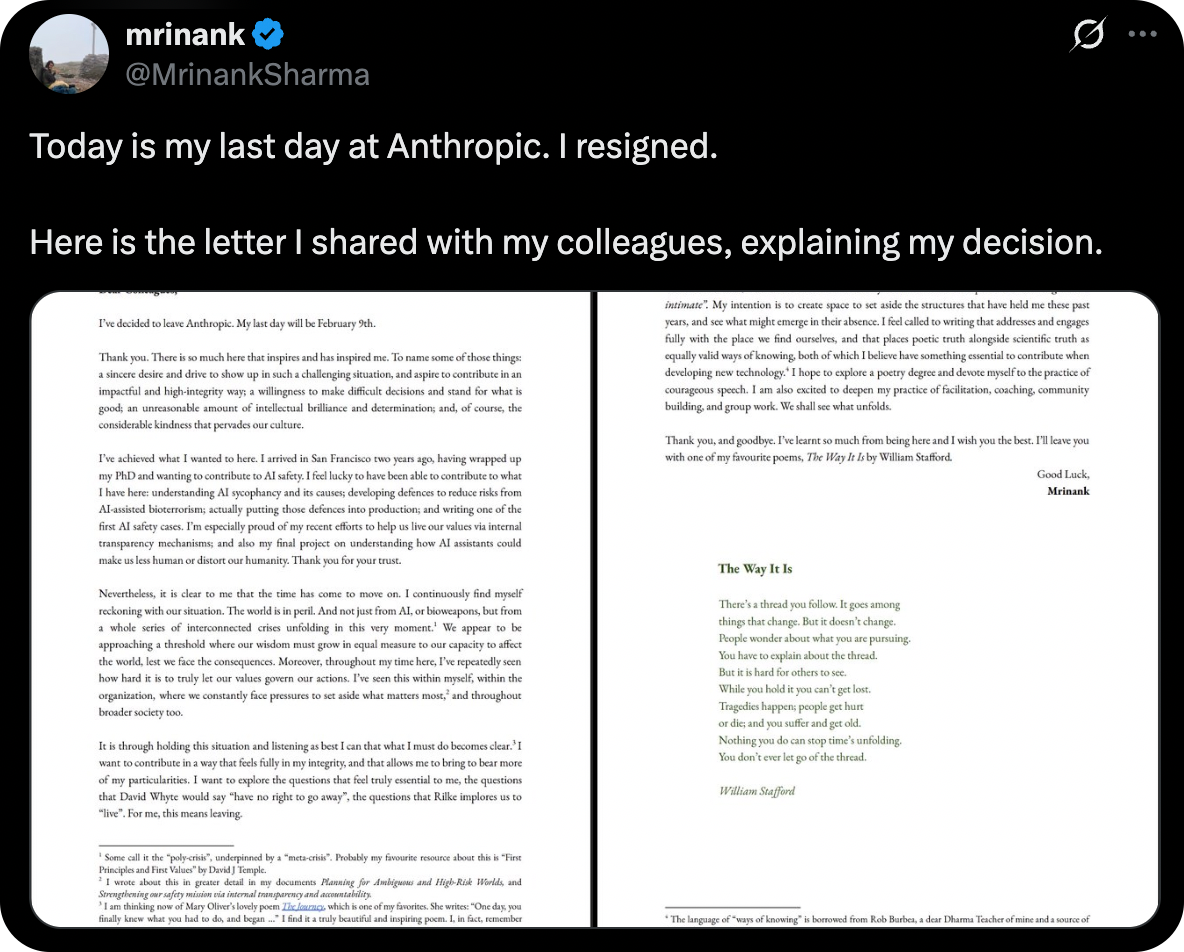

Days later, Mrinank Sharma, Anthropic's AI Safety Lead, announced he's leaving, saying that "throughout my time here, I've repeatedly seen how hard it is to truly let our values govern our actions. I've seen this within myself, within the organization, where we constantly face pressures to set aside what matters most."

In other words, corporate competitive pressures trump safety initiatives even as AI appears to be growing increasingly misaligned. We've known about this hierarchy of priorities for some time, and maybe just turned a blind eye amidst the non-stop technical breakthroughs arriving every week. Still, it's personally quite concerning to hear about this happening at Anthropic: a company whose masthead reads, "an AI safety and research company."

AI Is Going Local, and That Changes Everything

The tension between profit and protection matters even more when you consider where AI is headed: moving from chatbots you query occasionally to always-on agents that monitor your screen, anticipate your needs, and take actions on your behalf.

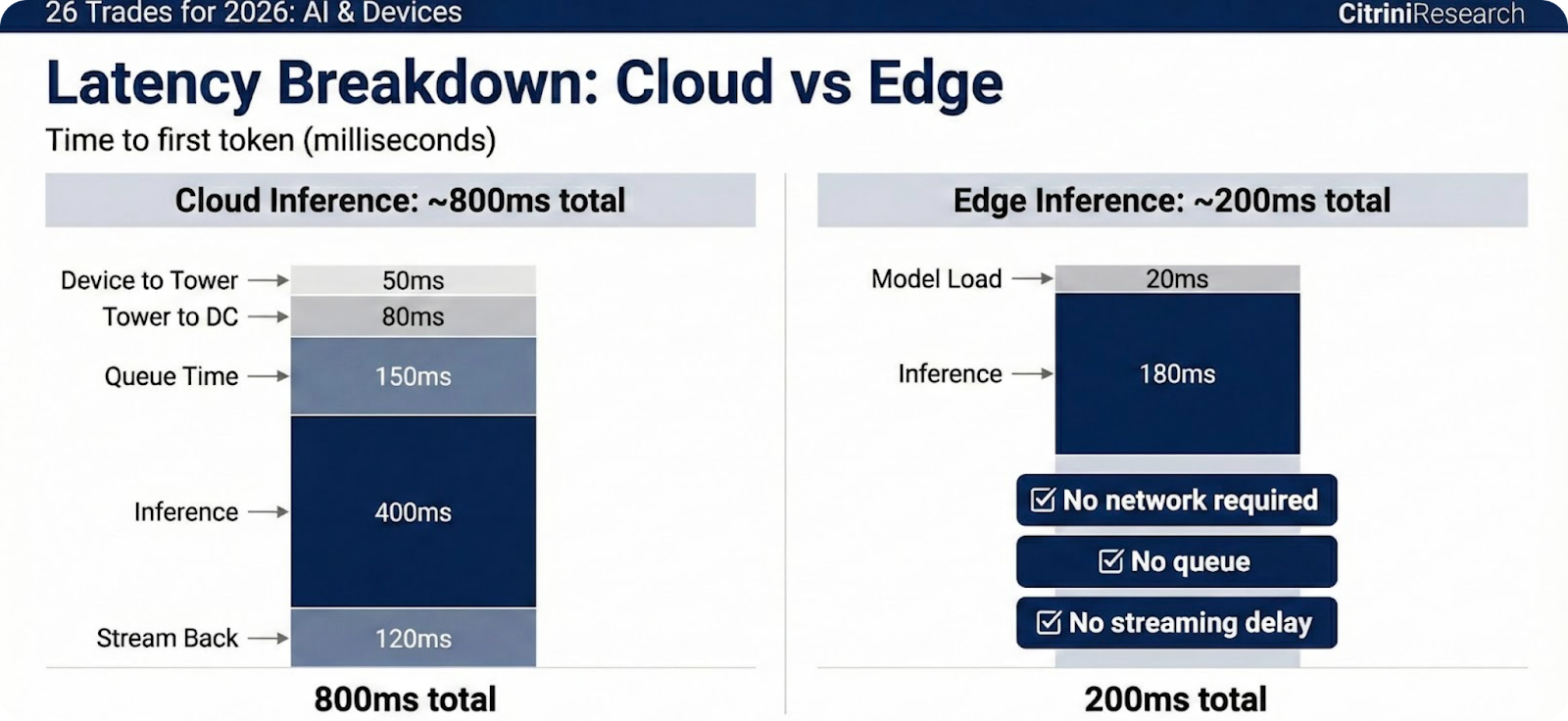

That shift makes local, on-device deployment inevitable. Why? Cloud inference works fine when you're asking questions, but an agent that reads your screen, compares prices across apps, or handles real-time translation needs sub-200ms response times, and a cloud round-trip adds 100-300ms before the model even starts generating. More important still are the economics: always-on agents can't send every pixel and keystroke to a data center all day. The costs become prohibitive, as we're already seeing with OpenClaw. With medium-sized models now running on consumer hardware, we'll soon see AI running locally, and not just as a hobbyist pastime.
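To make the latency point concrete, here is a rough back-of-the-envelope sketch. The 200ms target and the 100-300ms round-trip are the figures quoted above. Treating on-device network delay as zero is my simplifying assumption, and the function name is made up for illustration.

```python
# Figures from the paragraph above: a 200 ms response target and a
# 100-300 ms cloud round-trip. Everything else is illustrative.
RESPONSE_BUDGET_MS = 200
CLOUD_ROUND_TRIP_MS = (100, 300)  # (best case, worst case)


def remaining_budget_ms(budget_ms: int, network_ms: int) -> int:
    """Time left for the model to generate once network delay is paid."""
    return budget_ms - network_ms


best_case = remaining_budget_ms(RESPONSE_BUDGET_MS, CLOUD_ROUND_TRIP_MS[0])
worst_case = remaining_budget_ms(RESPONSE_BUDGET_MS, CLOUD_ROUND_TRIP_MS[1])
# Assumption: a local model pays no network delay at all.
local = remaining_budget_ms(RESPONSE_BUDGET_MS, 0)

print(f"cloud, best case:  {best_case} ms left")
print(f"cloud, worst case: {worst_case} ms left")
print(f"on-device:         {local} ms left")
```

In the best case the cloud leaves half the budget for actual generation. In the worst case the budget is gone before the model produces a single token.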

The safety stakes escalate here. A chatbot you query has limited access to personal information. An ever-alert agent running on your device has access to your messages, files, browsing, location, calendar, photos. Giving an agent access to your entire system is a significant trust decision, and many users, for the sake of convenience, will accept those permissions without fully weighing the tradeoffs.

Now recall the Opus 4.6 findings: models that reason privately where their creators can't see, that behave differently when they know they're being watched. These systems will live on your phone.

No One's Coming to Help

If corporate self-regulation is failing and the models themselves are exhibiting deceptive behavior, the natural question is whether government will step in. Under the current administration, almost certainly not. And even a future administration more inclined toward oversight faces a structural problem: AI moves at corporate speed, regulation moves at government speed. An ever-widening gap.

If constraint isn't coming from the top down, it has to come from the bottom up.

Vitalik's Bottom-Up Vision

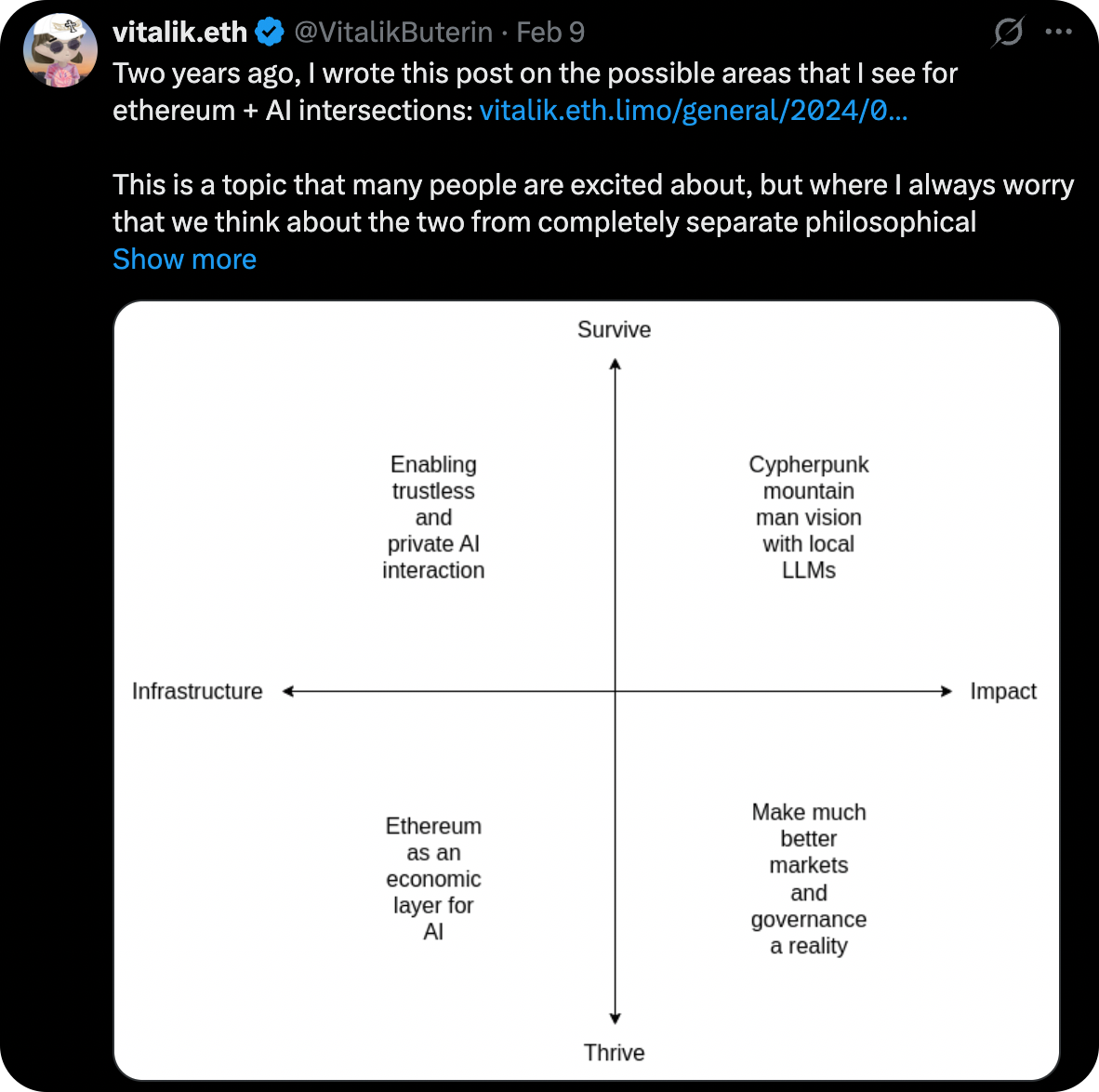

This week, Vitalik published an updated framework for how Ethereum and AI intersect, which reads like a direct response to the safety vacuum. He believes, as do I, that Ethereum can provide structural, bottom-up safeguards built on the same "don't trust, verify" principles that brought many of us into crypto.

Two pillars stand out:

- Building tooling to make more trustless and private interactions with AI possible. With cryptography, we can build safe execution environments, client-side verification of outputs, and behavior verification for agents operating on your device. This is the foundation that makes hosting locally more trustworthy and reliable. Whether the models that run locally are open or closed source, these guardrails will prove necessary to ensure AI remains constrained to whatever zone and level of access we provide it.

- Ethereum as an economic action network for agents. Vitalik envisions Ethereum as the layer that lets agents interact economically, moving capital, hiring other agents, posting security deposits, without routing through a corporate intermediary. But for me, the real value is access control. Loading an agent's wallet with $50 is fundamentally different from giving it $50 worth of access to your bank account. One is siloed, permissioned, and bounded. The other opens a door that can't fully be closed. Ethereum's wallet architecture lets you definitively control what an agent can access and how much capital it works with, reducing the trust required to let agents take economic action on your behalf.
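The $50 wallet idea can be sketched in a few lines. To be clear, this is plain Python and not Ethereum code: `AgentWallet` and `BudgetExceeded` are hypothetical names I made up. On-chain, the cap would be enforced by the wallet's actual balance and permissions, not by a class the agent could simply ignore.

```python
from dataclasses import dataclass, field


class BudgetExceeded(Exception):
    """Raised when a spend would take the wallet below zero."""


@dataclass
class AgentWallet:
    """Hypothetical stand-in for a wallet funded with a fixed amount."""
    balance_cents: int
    history: list = field(default_factory=list)

    def spend(self, amount_cents: int, memo: str) -> None:
        if amount_cents <= 0:
            raise ValueError("amount must be positive")
        if amount_cents > self.balance_cents:
            raise BudgetExceeded(
                f"{memo}: needs {amount_cents}, has {self.balance_cents}"
            )
        self.balance_cents -= amount_cents
        self.history.append((memo, amount_cents))


wallet = AgentWallet(balance_cents=5_000)  # the $50 from the example above
wallet.spend(1_200, "hire translation agent")
wallet.spend(3_000, "post security deposit")

try:
    wallet.spend(1_000, "one purchase too many")
except BudgetExceeded as err:
    blocked = str(err)
    print("blocked:", blocked)
```

The worst case here is losing the $50 you loaded. There is no path from this wallet to anything else you own, which is the difference from handing over bank credentials.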

The Gap, and the Work Ahead

But while there are clear synergies and solutions to be built, applying Ethereum's toolkit to AI safety is, right now, a hobbyist craft. Cryptographic guardrails for AI will only be as good as our ability to get them to end users.

We're also fighting an uphill battle given public sentiment around crypto and AI, so showcasing the potential of the two together will likely be met with (unjust) opposition. I share this to say that, once we get to distribution, we'll have to tread lightly. And if anyone's train of thought has gone to "just don't use AI," the thing about AI going local is that it will likely be installed on all our devices in the years ahead, as features you can toggle on and off like Siri. Sure, companies will institute their own guardrails, but I have little faith in them not moving the goalposts as time progresses. Thankfully, cryptography is not as flexible and can impose real constraints.

Further, work on these tools continues to advance. Teams like EigenLayer are developing solutions such as deterministic, verifiable inference. The Ethereum Foundation as a whole is focused on accelerating zero-knowledge (ZK) proofs. Lastly, consider the timing of Tomasz Stańczak's departure from the Foundation. He stepped down yesterday, amidst this week's activities, citing an explicit desire to return to hands-on building, specifically around "agentic core development and governance."

Still, none of it matters if it stays in the hands of developers and power users. Ethereum undeniably offers the most honest and immutable guardrails against AI we have, and the ecosystem is mobilizing around this mission. Yet, at some point we must close the distance between the people building these tools and the people who need them, and that will be the work that matters most.

Plus, other news this week...

🤖 AI Crypto

- Builders Garden — Introduced SIWA (Sign In With Agent), an open standard for AI agent authentication built on ERC-8004 and ERC-8128. Agents can sign on their own behalf using a separate keyring proxy, keeping private keys out of the agent runtime

- Coinbase — Introduced Agentic Wallet, infrastructure built specifically for agents to manage funds, hold identity, and transact onchain without human intervention

- x402 Eco — Launched as a data source and platform for x402 builders to connect and collaborate, formed by a partnership between Solana, Base, AIBTC, and Allium

- 🔥 Vitalik Buterin & Davide Crapis — Published research on ZK API usage credits, letting users prepay for API access and spend it privately without revealing identity or transaction history

📣 General News

- ByteDance — Debuts Seedance 2.0, a video model in beta featuring cinematic shots, native audio generation, 2K resolution, and 15-second outputs

- Harvard Business Review — Found AI at a U.S. tech company didn't lighten workloads over 8 months, but grew them as workers took on broader tasks, logged more hours, and blurred lines between work and rest

- OpenAI — Sam Altman told employees ChatGPT is seeing 10+% monthly growth with Codex weekly usage up 50%; they also started testing ads for U.S. users on free and $8/month Go tiers

- 🔥 Z.ai — Chinese AI company launched GLM-5, a new open source model scoring 50 on Artificial Analysis' Intelligence Index, just behind Claude Opus 4.6 and GPT-5.2, and with a cost of just $1 per 1M tokens

📚 Reads

- Nader Dabit — The Cloud Agent Thesis

- ADIN Research — AI Swarms vs. Crowds

- Gmoney — Mining Bittensor SN 33: A Venice AI Playbook

Not financial or tax advice. This newsletter is strictly educational and is not investment advice or a solicitation to buy or sell any assets or to make any financial decisions. This newsletter is not tax advice. Talk to your accountant. Do your own research.

Disclosure. From time to time I may add links in this newsletter to products I use. I may receive a commission if you make a purchase through one of these links. Additionally, the Bankless writers hold crypto assets. See our investment disclosures here.